From OLTP to OLAP: Designing a Modern Data Stack for Enterprise Agility

By: The Data Engineering Team at DataSOS Technologies

There is a predictable breaking point in every growing, data-driven company. It happens most often in a crucial business moment: A marketing team runs a huge, complex query to analyse three years of customer behaviour, and suddenly the customer-facing application crashes.

Why? The reason is that the engineering team allows analytical queries to be run on the production database.

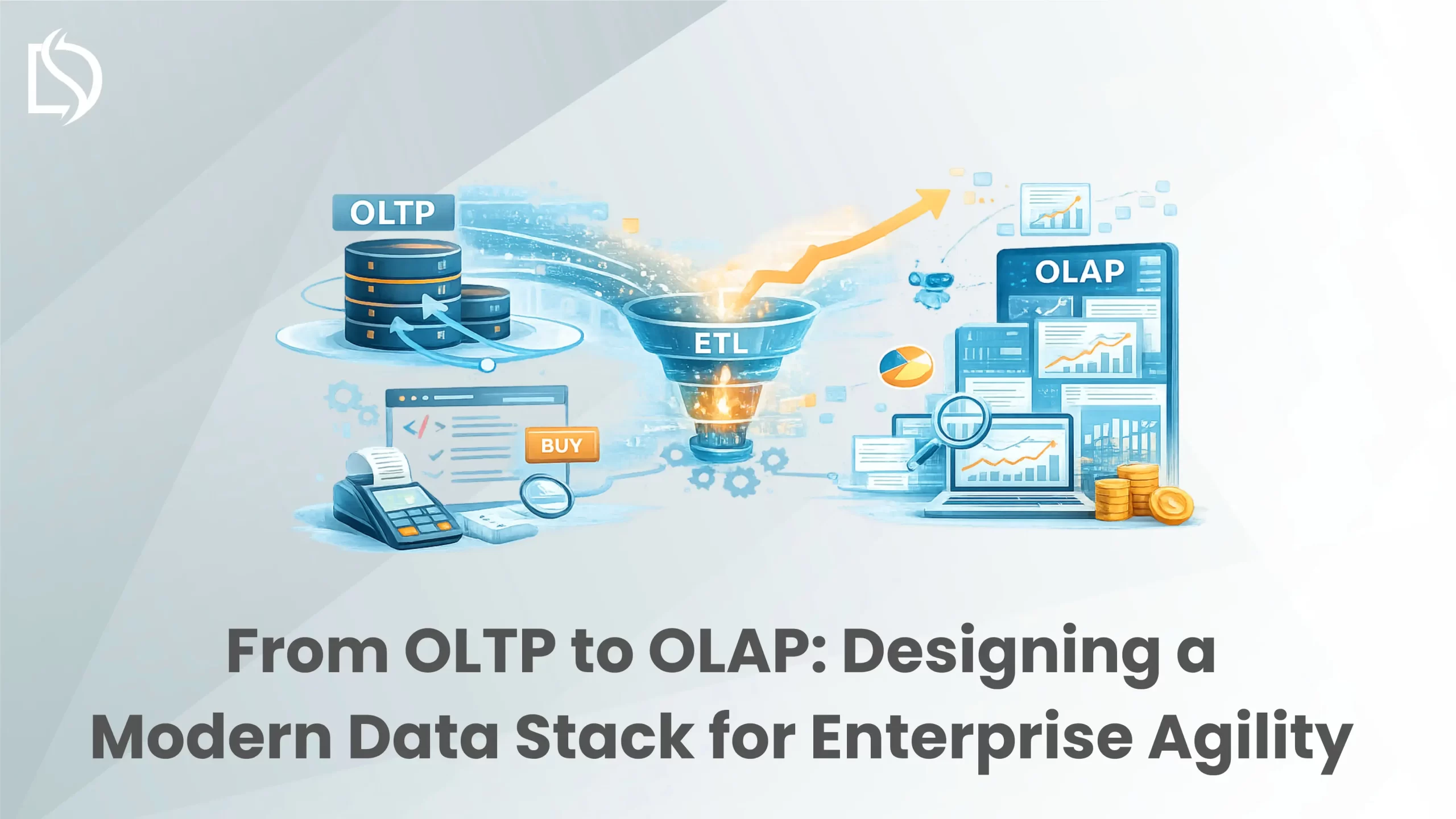

The separation of transactional systems from analytical systems is the first fundamental step for CTOs and Lead Data Engineers when scaling an enterprise. OLTP is the move from online transaction processing to online analytical processing.

At DataSOS Technologies, we build high-throughput data pipelines that process over 15 billion data points per month, thereby assisting finance, e-commerce and healthcare clients in untangling their legacy databases and building Modern Data Stacks that deliver zero-latency business intelligence.

Here is an engineer’s guide to why you need to separate these environments and how to design a modern, scalable data stack.

The Bottleneck: Why OLTP Fails at Analytics

The primary database of your application, whether it’s PostgreSQL, MySQL or a NoSQL solution like MongoDB, is built for OLTP.

OLTP systems are built for the frontline. They perform very large volumes of very simple, rapid transactions: A customer placing an order, a user updating their profile, or a sensor logging a temperature reading.

- The Architecture: OLTP databases are normally organised to reduce redundancy of data and use row-based storage. They consider ACID compliance as a top priority for data integrity during simultaneous updates.

- The Problem: The normalised data is split across dozens of tables, so historical analysis involves huge computationally expensive JOIN operations. ONE VERY complex analytical query can lock tables, use all available CPU and stop your production app.

OLTP was designed to run your business operations. It was not built around analysing them.

The Destination: The Power of OLAP

Years of historical data need a dedicated environment to analyse without affecting production: an OLAP system, often called a Data Warehouse (e.g. Snowflake, Amazon Redshift, Google BigQuery).

- The Architecture: OLAP systems use columnar instead of row-based storage. An OLAP database scans only the “price” column if you want to calculate the average sale price over millions of rows.

- The Advantage: OLAP systems can assemble billions of rows in milliseconds. They are denormalised, meaning data is structured to speed up reading and analysis.

Offloading your heavy analytical workloads to an OLAP system makes your production OLTP database lightning fast while your business analysts get their reports immediately surfaced through Data Analysis and Visualization dashboards.

Designing the Modern Data Stack: The Architecture

Moving data from OLTP systems to an OLAP warehouse requires a robust architecture known as the Modern Data Stack. It is not a single tool, but a modular ecosystem designed for agility and scale.

Here are the core components of a modern architecture:

1. Ingestion: Extracting the Raw Material

Data doesn’t just live in your OLTP database. It lives in your CRM, your marketing platforms, and increasingly, on external websites.

- Internal Ingestion: Using Change Data Capture (CDC) or batch extraction to pull data from your application databases without impacting performance.

- External Ingestion (Web Scraping): This is a critical blind spot for many enterprises. Your internal data only tells half the story. To gain real market intelligence, you need to ingest external data competitor pricing, real estate listings, or public compliance data. At DataSOS, we engineer resilient web scraping pipelines that bypass advanced anti-bot defences to funnel high-volume, external unstructured data directly into your stack.

2. The Shift from ETL to ELT

Historically, data was extracted, transformed (cleaned and modelled on a separate server), and then loaded into the warehouse (ETL). With the massive computing power of modern cloud warehouses (AWS, Azure), the paradigm has shifted to ELT (Extract, Load, Transform).

- Data is extracted and loaded raw into the data warehouse.

- Transformations are executed directly inside the OLAP environment using the warehouse’s own scalable compute power (often utilising SQL-based tools like dbt). This prevents data loss and allows engineers to rebuild transformation models at any time from the raw, historical data.

3. Data Transformation and Modelling

Raw data is messy. Dates are formatted incorrectly, currencies conflict, and duplicates abound. In this layer, data is cleansed, validated, and joined into clean, structured tables (often using Star or Snowflake schemas). This ensures that when the finance team pulls a report, they are looking at a “single source of truth.”

4. BI and Visualisation: The Actionable Layer

Once the data is structured within the OLAP warehouse, it is pushed to Business Intelligence (BI) tools.

- Static spreadsheets are replaced by dynamic, real-time dashboards using tools like Tableau and Microsoft Power BI.

- This is where operations managers and executives gain visibility, enabling faster, evidence-based strategic decisions without manual crunching.

The DataSOS Approach: Engineering for Zero Latency

Building a Modern Data Stack sounds straightforward in theory, but in practice, data pipelines are notoriously fragile. A slight schema change in your OLTP database can break your ingestion script; a sudden spike in web traffic can cause data latency; fragmented information can lead to silent errors in your BI dashboards.

At DataSOS Technologies, we don’t just set up tools; we engineer custom software infrastructure. Our approach to ETL / ELT Data Processing is built for enterprise resilience:

- Fault-Tolerant Pipelines: We architect high-throughput systems that cleanse and validate fragmented information on the fly. We build with zero data loss or latency in mind, ensuring reliable delivery to downstream applications.

- Complex Data Integration: We seamlessly blend your internal OLTP data with highly complex external data gathered through our proprietary web scraping networks.

- Technology Agnostic, Architecturally Sound: Whether your environment requires Python, Node.js, SQL/NoSQL, AWS, or Azure, we deploy the right foundational technologies for billion-point workloads.

Stop letting fragmented databases and manual spreadsheet crunching hold back your growth. Transform your raw operational data into a strategic differentiator.

Ready to build a data infrastructure that scales with your ambition? Schedule your free consultation with DataSOS Technologies today and see how we engineer the modern data stack.

SUBSCRIBE TO OUR NEWSLETTER

RECENT POSTS

-

From OLTP to OLAP: Designing a Modern Data Stack for Enterprise Agility

From OLTP to OLAP: Designing a Modern Data Stack for Enterprise Agility -

The Impact of AI in Bulk Data Scraping and the Rise of Autonomous Agents

The Impact of AI in Bulk Data Scraping and the Rise of Autonomous Agents -

The Role of Real Estate Data in Decision Making

The Role of Real Estate Data in Decision Making -

Architecting Proxy Chains for Distributed Traffic Flow in Akamai-Protected Environments

Architecting Proxy Chains for Distributed Traffic Flow in Akamai-Protected Environments -

How to Navigate Cloudflare in 2026?

How to Navigate Cloudflare in 2026?